AI is now a part of how teams work every day. Many organizations use copilots, chatbots, and internal assistants to help users find information, reduce manual searching, and manage growing documentation. These tools boost productivity, but they also bring a common problem. Sometimes, a general AI model sounds confident but gives information that does not match your product, terminology, or documentation.

Retrieval-augmented generation, or RAG, helps fix this issue by making sure AI uses approved content. Rather than relying only on training data, the model pulls information from chosen sources to create its answers. This approach reduces mistakes, keeps answers in line with your documentation, and gives teams more control over how AI works in content workflows.

What Is Retrieval-Augmented Generation?

Retrieval-augmented generation combines search and generation. The system starts by finding relevant information in a set of approved documents. It then passes this content to the model as context for its answer. This helps keep responses close to your reviewed and validated material.

A typical RAG workflow follows a simple sequence:

- A user asks a question.

- The system searches a controlled set of documents.

- It retrieves the most relevant passages or components.

- The model uses this material as context.

- The final answer is generated from that context.
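The retrieval part of this sequence can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the document IDs and text are hypothetical, and simple word overlap stands in for the keyword or embedding search a real system would likely use.

```python
import re
from collections import Counter

# Hypothetical approved documents; IDs and text are illustrative only.
APPROVED_DOCS = {
    "install-guide": "Run the installer and restart the service to finish installation.",
    "reset-password": "To reset a password, open Settings and choose Reset Password.",
    "export-report": "Reports can be exported as PDF from the Reports menu.",
}

def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def retrieve(question, docs, top_k=1):
    """Rank documents by how many question words they share (assumed scoring)."""
    q_tokens = Counter(tokenize(question))
    scored = []
    for doc_id, text in docs.items():
        overlap = sum((q_tokens & Counter(tokenize(text))).values())
        if overlap > 0:
            scored.append((overlap, doc_id))
    # Highest overlap first; ties broken by document ID for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:top_k]]

def build_context(doc_ids, docs):
    """Join the retrieved passages into the context handed to the model."""
    return "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in doc_ids)

question = "How do I reset my password?"
hits = retrieve(question, APPROVED_DOCS)
context = build_context(hits, APPROVED_DOCS)
print(context)
```

The generation step is left out on purpose. Whatever model is used, it would receive `context` together with the question rather than the question alone.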

How RAG Works Step by Step

Most RAG systems follow a clear pattern:

- The user asks a question in a chatbot, copilot, or help interface.

- The system searches approved repositories or documentation sets.

- It retrieves topics or components that best match the query.

- The model uses only the retrieved content to generate an answer.

- The response may include references to the original source.

By using verified information, RAG reduces errors and makes AI responses more consistent.
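The last two steps, answering only from retrieved content and pointing back to the source, often come down to how the prompt is assembled. The sketch below shows one possible way to do that. The `Passage` type, the prompt wording, and the source names are assumptions for illustration, and no specific model API is implied.

```python
from dataclasses import dataclass

@dataclass
class Passage:
    source: str  # hypothetical topic identifier
    text: str

def build_grounded_prompt(question, passages):
    """Build a prompt that limits the model to the retrieved passages.

    Returns None when nothing was retrieved, so the caller can decline
    to answer instead of letting the model guess.
    """
    if not passages:
        return None
    numbered = "\n".join(
        f"[{i}] ({p.source}) {p.text}" for i, p in enumerate(passages, start=1)
    )
    return (
        "Answer using only the passages below. "
        "If they do not contain the answer, say you do not know.\n\n"
        f"Passages:\n{numbered}\n\n"
        f"Question: {question}\n"
        "Cite passage numbers in your answer."
    )

def list_sources(passages):
    """Return the unique sources in retrieval order, for display with the answer."""
    return list(dict.fromkeys(p.source for p in passages))

passages = [
    Passage("reset-password", "Open Settings and choose Reset Password."),
    Passage("account-security", "Passwords expire after a set period."),
]
prompt = build_grounded_prompt("How do I reset my password?", passages)
sources = list_sources(passages)
```

Returning `None` for an empty result is a design choice in this sketch: when no approved content matches, declining is usually safer than an ungrounded answer.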

Why RAG Matters for Technical Content and Documentation

Documentation teams need accuracy, version control, and clear terminology. If an AI model is not grounded, it might add information that looks right but does not match the product. RAG helps avoid this by making the system use approved content that already fits your release cycle and documentation standards.

Key advantages for documentation environments include:

- Better alignment with real product behavior

- Reduced inconsistencies across channels

- More predictable responses from AI tools

- Faster access to approved information

- Fewer incorrect or incomplete answers

- Clearer expectations for how AI should behave

RAG works best when your content is structured, organized, and reusable. Teams with a strong content foundation usually get more accurate results and better performance.

You don’t just need better content. You need control over how that content is delivered across channels and systems.

RAG Compared to Out-of-the-Box AI Models

General AI models use broad training data. They can explain ideas, summarize text, and give examples, but they do not know your product or documentation by default. This can lead to answers that sound confident but are not always correct.

RAG avoids this problem by making the model use content your team has approved. The model relies on your documentation instead of guessing.

How RAG Supports AI Governance

As more teams use AI, it is important to know how responses are created. RAG helps with governance by making sure the model uses approved, trusted content sources and that answers meet the same review standards as your documentation.

RAG also supports version control and audit history. When the model gives an answer, teams can see which documents were used, making things more transparent and helping with compliance. This is part of the infrastructure required to deliver controlled, reliable AI at scale.
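One way to make that audit trail concrete is to store a small record with every answer. The sketch below assumes each retrieved source arrives as a `(doc_id, version, text)` tuple. The field names and sample values are hypothetical, not taken from any particular platform.

```python
import hashlib
import json
from datetime import datetime, timezone

def make_audit_record(question, answer, sources):
    """Capture which document versions backed an answer.

    `sources` is an iterable of (doc_id, version, text) tuples (assumed shape).
    A content hash lets reviewers confirm the exact text that was used.
    """
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "question": question,
        "answer": answer,
        "sources": [
            {
                "doc_id": doc_id,
                "version": version,
                "sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
            }
            for doc_id, version, text in sources
        ],
    }

record = make_audit_record(
    question="How do I reset my password?",
    answer="Open Settings and choose Reset Password.",
    sources=[("reset-password", "2.1", "Open Settings and choose Reset Password.")],
)
log_line = json.dumps(record)  # one JSON object per line is easy to append and search
```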

Governance depends on more than retrieval. It requires control over how content is delivered to AI systems and where that content is sourced from. Platforms like MadCap Syndicate provide that delivery layer, ensuring AI tools only access approved, up-to-date content across channels.

Key Building Blocks of a Strong RAG Implementation

A RAG system works best when the content is organized and consistent. Many organizations think AI model performance matters most, but the quality of retrieval depends more on how content is written and stored.

Important building blocks include:

- Controlled delivery endpoints that manage how content is accessed by AI systems

- Machine-readable, structured content

- Consistent formatting and terminology

- Accurate and complete metadata

- Centralized content repositories

- Defined access and permissions

- Version control across updates

- Reusable components and predictable structures

These building blocks are part of the structured content practices that support today’s authoring and publishing workflows.
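Several of these building blocks, such as metadata, permissions, and version control, can act as filters applied before any search runs. The sketch below assumes each topic carries a product version, a review status, and a set of allowed roles. The field names and sample values are illustrative only.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Topic:
    topic_id: str
    product_version: str
    status: str               # assumed values: "approved" or "draft"
    allowed_roles: frozenset  # roles permitted to see this topic

def eligible_topics(topics, product_version, user_role):
    """Keep only approved topics for the requested version that the role may see."""
    return [
        t for t in topics
        if t.status == "approved"
        and t.product_version == product_version
        and user_role in t.allowed_roles
    ]

topics = [
    Topic("reset-password", "2.1", "approved", frozenset({"customer", "support"})),
    Topic("reset-password-old", "1.9", "approved", frozenset({"customer", "support"})),
    Topic("admin-recovery", "2.1", "approved", frozenset({"support"})),
    Topic("sso-setup", "2.1", "draft", frozenset({"customer", "support"})),
]

visible = [t.topic_id for t in eligible_topics(topics, "2.1", "customer")]
```

Filtering before retrieval means outdated, unreviewed, or restricted topics never reach the model, no matter how well they match the question.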

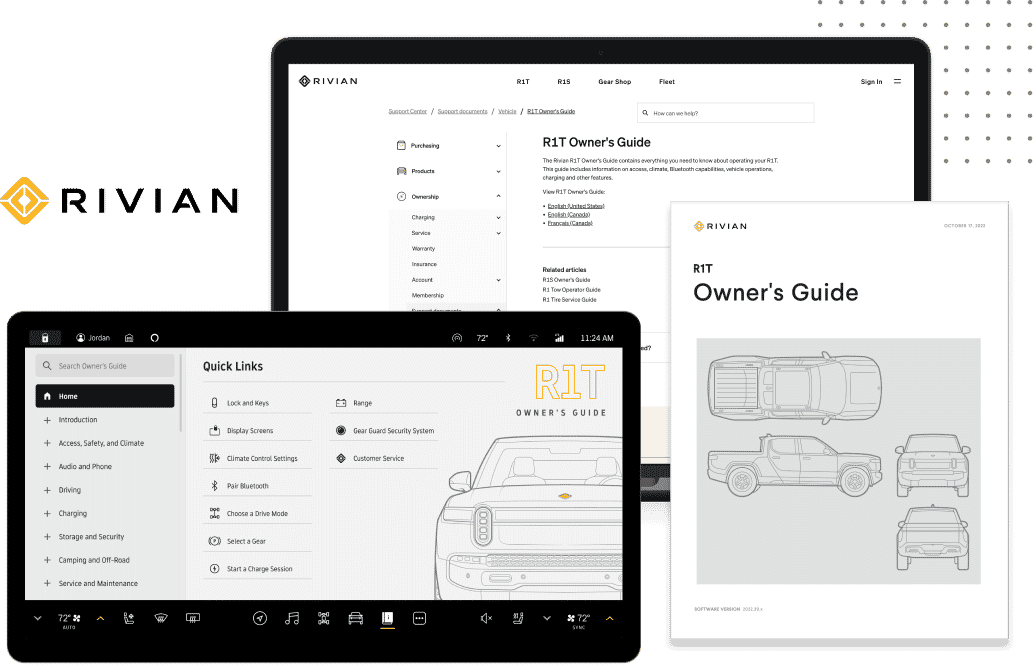

Where RAG Shows Up in Real Workflows

RAG can make documentation and support more accurate. It is not only for technical AI teams; it can help any workflow that needs consistent, verified information.

Common applications include:

- Documentation copilots that surface related topics

- Internal knowledge assistants that retrieve approved answers

- Customer-facing chatbots

- Support portals and troubleshooting guides

- In-product help panels

- Training and onboarding tools built from existing content

All these workflows depend on predictable, reviewed information, so RAG is a great choice for documentation teams. It is also a core part of building an effective AI content strategy.