When I first started at CM Labs, one thing was apparent: we needed MadCap Flare.

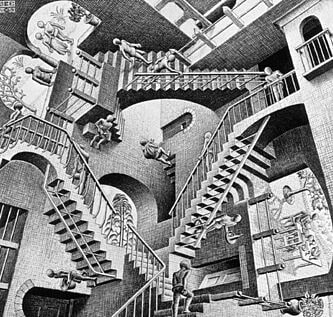

Having used Flare at previous jobs, I recognized it to be the premier tool for authoring attractive SME documentation quickly and easily. Unfortunately, the help system I inherited was a DITA/Doxygen system predicated upon a dizzying array of scripts that called each other to build CHM output.

It was the batch file equivalent of an M.C. Escher work.

After making my case for Flare, I was off to the races, modernizing the system while making it easier to add and edit topics (not to mention producing output much more quickly than before). I even used its handy CHM import feature to quickly port the old help over to shiny new HTML5 output.

But the writing tool wasn't the only thing that needed updating (I'm looking at you, reviews!).

Reviews

Company principles dictated that we have traceability in all things, including documentation reviews. We needed a clear way to follow changes from A to B, and B to C, so that we could always go back and follow the logic that brought us to the end point. This would be useful if a feature were ever rolled back, or if a new writer came on board and needed to understand the evolution of a chapter.

The system I found in place often used PDF files for reviews, which wasn't ideal. The subject matter expert (SME) would review a specially generated PDF, add comments to the file, and send it back to the writer (sometimes hand-annotated printouts were used instead). This was problematic in at least two ways:

- My work is entirely web-based, so generating a special PDF created extra overhead.

- It was hard to follow review iterations, and that back and forth was not reliably recorded anywhere.

Confluence

We use Atlassian Confluence at work as a collaborative information platform, and I've heard of some tech writers elsewhere who use this system for their reviews. An SME would write an initial outline, the tech writer would use that information as the basis of a topic, then use the commenting system in Confluence to communicate back and forth with the SME concerning any changes.

This system has some perks in that you can tag coworkers so they're notified about any pertinent comments or even wholesale changes to the page itself. The page history functionality also gives you the ability to see the content's progression over time, giving the traceability we desired.

However, in my experience, opening up comments on a Confluence page often leads to digressions, those lengthy conversations in the margins that can continue for some time without any clear resolution about the content, leaving the technical writer in limbo.

Also, it's not great for reviews of modified, pre-existing content, as it requires the tech writer to copy text from Flare, paste it into Confluence, then paste it back into Flare once reviewed.

JIRA

After putting our heads together, my managers and I came up with a solution that better fit our workflow and company principles: JIRA.

If you're not familiar with JIRA, it's another Atlassian product, used for project management as well as bug and issue tracking. My documentation issues are created as “stories” in JIRA, laying out my tasks in a clear list or table view. Since everyone in the company already uses this tool, why not integrate it with doc reviews?

The idea was that we'd create a review file and attach it to the relevant JIRA issue, then add a comment tagging the SME and asking him/her to review the attached file. It's easy, traceable, and uses tools with which coworkers are already familiar.

The next question: what kind of file to attach? Continuing to use annotated PDFs would have been an inelegant solution.

MadCap Contributor

That's where MadCap Contributor comes in. Contributor is a content review tool built specifically to integrate with Flare, making SME reviews seamless.

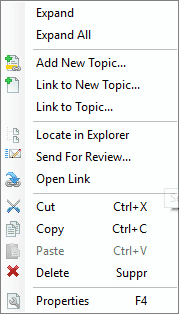

To get the ball rolling, the tech writer:

- Right-clicks a topic (or a group of topics)

- Selects Send For Review...

- Saves the resulting FLTREV file

- Attaches the FLTREV file to the JIRA issue and tags the SME

In fact, to help out my coworkers, I wrote a Documentation Review Process post in Confluence, the steps of which I've reproduced below:

- The technical writer attaches the review package (.fltrev file) to the JIRA issue in question, with the writer's initials and revision number of the package added to the file name.

- The SME picks up the package from the JIRA issue.

- The SME opens the package in MadCap Contributor and adds comments where necessary. (Select "Use Free Review" if this is your first time installing Contributor.)

- The SME re-attaches the annotated package (the FLTREV file) to the JIRA issue, replacing the writer's initials with his/her own, leaving the revision number as is.

- The technical writer re-imports the package into MadCap Flare and makes any necessary changes.

- If necessary, another iteration of this process occurs, with the revision number increased by one.
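The naming convention in these steps is easy to get wrong after a few rounds, so it can help to make it mechanical. Below is a minimal Python sketch, assuming a hypothetical `<topic>_<INITIALS>_r<revision>.fltrev` layout. The process itself only says that initials and a revision number go in the file name, so the exact pattern, the `ReviewPackage` class, and the helper function names are all illustrative and are not part of Flare, Contributor, or JIRA.

```python
import re
from dataclasses import dataclass

# Hypothetical file-name layout: <topic>_<INITIALS>_r<revision>.fltrev
# (an assumption; the process only requires initials and a revision number).
_NAME_PATTERN = re.compile(
    r"^(?P<topic>.+)_(?P<initials>[A-Z]{2,3})_r(?P<revision>\d+)\.fltrev$"
)


@dataclass(frozen=True)
class ReviewPackage:
    """One review package attached to a JIRA issue (hypothetical helper)."""
    topic: str
    initials: str
    revision: int

    @property
    def filename(self) -> str:
        return f"{self.topic}_{self.initials}_r{self.revision}.fltrev"


def parse_package_name(filename: str) -> ReviewPackage:
    """Recover topic, initials and revision from an attached file name."""
    match = _NAME_PATTERN.match(filename)
    if match is None:
        raise ValueError(f"not a review package name: {filename!r}")
    return ReviewPackage(match["topic"], match["initials"], int(match["revision"]))


def writer_sends(topic: str, writer_initials: str, revision: int = 1) -> ReviewPackage:
    """Step 1: the writer names the package with their initials and revision."""
    return ReviewPackage(topic, writer_initials.upper(), revision)


def sme_returns(package: ReviewPackage, sme_initials: str) -> ReviewPackage:
    """Step 4: the SME swaps in their own initials; the revision is unchanged."""
    return ReviewPackage(package.topic, sme_initials.upper(), package.revision)


def next_iteration(package: ReviewPackage, writer_initials: str) -> ReviewPackage:
    """Step 6: another round restarts with the writer and revision + 1."""
    return ReviewPackage(package.topic, writer_initials.upper(), package.revision + 1)


if __name__ == "__main__":
    sent = writer_sends("Cables_Overview", "jd")
    returned = sme_returns(sent, "ab")
    second_round = next_iteration(returned, "jd")
    for pkg in (sent, returned, second_round):
        print(pkg.filename)
    # Cables_Overview_JD_r1.fltrev
    # Cables_Overview_AB_r1.fltrev
    # Cables_Overview_JD_r2.fltrev
```

Keeping the revision number unchanged on the SME's return trip means each round is identified by a single number, while the initials tell you whose copy of that round you're looking at.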

I always make sure to add a link to the Documentation Review Process when asking for a review, in case the SME doesn't remember the steps or it's the SME's first time reviewing.

Parting Thoughts

At first, you might meet a little reluctance from your coworkers about having to install extra software on their machines. Don't worry, though: once you explain how simple it is to add comments directly to the Contributor file, they'll be on board, and the review process will be far more streamlined than any of the alternatives used in the past.

If you're already using these or similar tools at your office and are looking for a better review process, give this a shot. It has definitely helped us implement a unified, quick and traceable review process that's easy to learn and follow.

Learn how to streamline your own review workflow. Get started with a free 30-day trial of MadCap Flare and MadCap Contributor, complete with access to our dedicated support team.